Being Real Is Becoming a Requirement for Creators in Instagram’s AI-Slop Economy

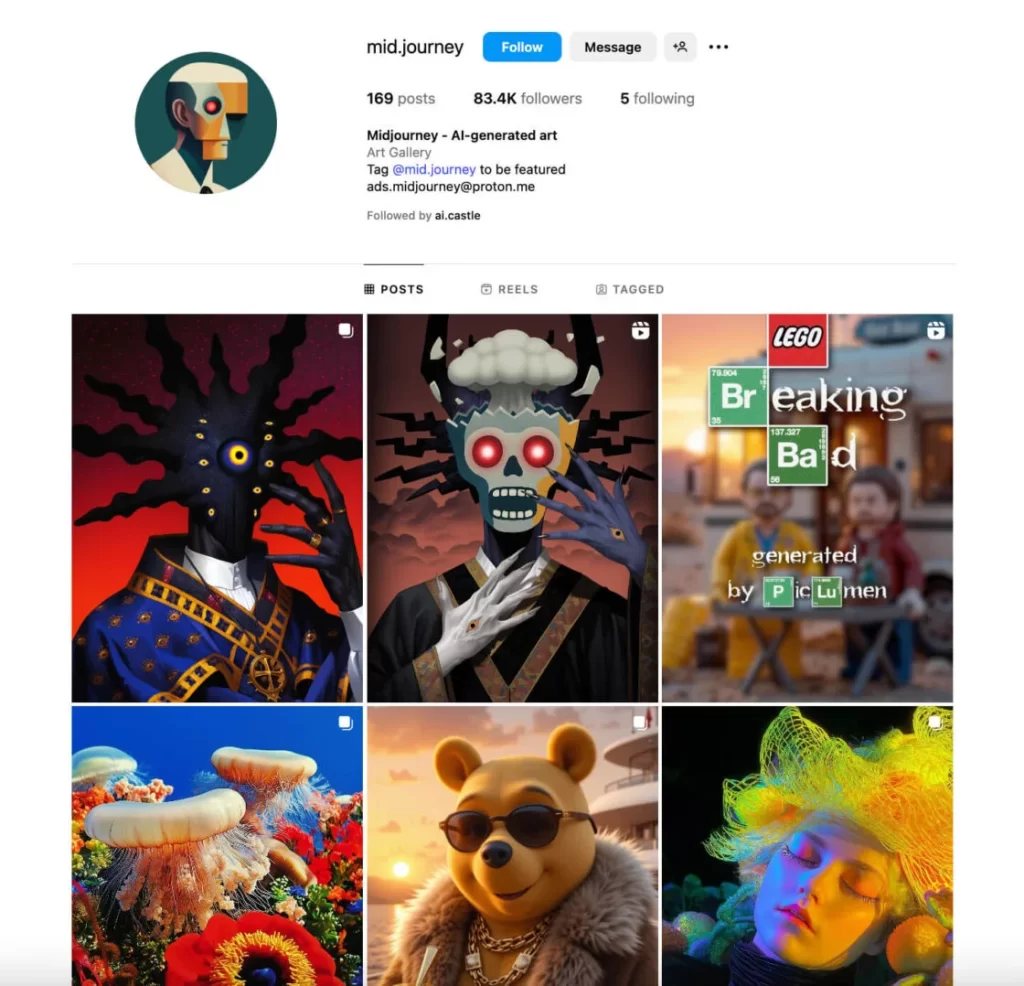

In 2025, AI-generated content quietly took over social media feeds. By 2026, even platform leaders are admitting it’s a problem. Adam Mosseri, head of Instagram, has acknowledged that authenticity is becoming harder to verify as AI content grows more realistic. The result: creators are now expected to actively prove they’re human.

Why AI Content Is Flooding Instagram

AI tools make it easy to produce large volumes of content quickly. While early AI posts had obvious visual flaws, newer models now copy realistic styles, imperfections, and casual formats. This has resulted in feeds filled with low-effort but convincing AI-generated posts, often referred to as AI slop.

Instagram’s Response to the Growing AI Slop

Instagram says it is still working on tools that can detect and label AI-generated images, videos, and audio. According to Adam Mosseri, the goal is to give users more clarity about what they are seeing, but he also admitted that detection alone will not be enough as AI content becomes more realistic and widespread.

Instead of only trying to flag fake posts, Instagram is increasingly looking at the opposite approach: clearly identifying and highlighting real, human-made content. The idea is that as AI-generated posts flood the platform, it may be more practical to signal what is authentic rather than chase every piece of synthetic media.

Instagram, which is owned by Meta, is experimenting with AI labels, metadata signals, and creator-based indicators to provide context around content. However, Mosseri has made it clear that creators will also play a role in this system. Showing process, behind-the-scenes work, and real-time creation may become key ways for creators to build trust.

In short, Instagram’s plan is less about eliminating AI content and more about helping users recognize what’s real. As AI-generated media becomes unavoidable, the platform appears to be shifting responsibility: creators are asked to actively prove authenticity, while Instagram provides the tools to support that distinction.

Why Creators Now Have to Prove They’re Real

With AI content becoming common, creators can no longer assume audiences trust what they see. To stand out, creators may need to show:

- Behind-the-scenes footage

- Their creation process

- Real-time filming, voice, or face

Authenticity is shifting from an expectation to a requirement.

Beyond visibility, showing the work creates context. Viewers are more likely to believe content when they can see the effort, decisions, and time behind it. In an environment where AI can generate results instantly, process becomes proof.

Over time, audiences may start favoring creators who feel transparent and human, even if their content looks less polished. Authenticity is no longer just about honesty—it’s about demonstrating real involvement. In Instagram’s AI-heavy ecosystem, being real is quickly becoming a core part of a creator’s value.

What This Means for the Future of Instagram

Looking ahead, this could reshape how creators build and maintain audiences. Feeds may favor consistency, presence, and personal context over perfectly produced visuals. Creators who regularly show up as themselves—sharing progress, failures, and real-time moments—are likely to build deeper trust and longer-lasting communities.

In the future, algorithms may also begin rewarding signals of authenticity, such as original footage, creator identity, and interaction patterns that AI struggles to replicate. As AI-generated content becomes the background noise of social media, human stories, effort, and credibility could become the real differentiators that decide who stands out and who fades away.
